
If you wish to operate on Hadoop data follow the below steps to download utility for Hadoop: In addition to running the spark on the YARN or Mesos cluster managers, Spark also provides a simple standalone deploy mode. In System variable Add%SPARK_HOME%\bin to PATH variable.In User variable Add SPARK_HOME to PATH with value C:\spark\spark-2.4.6-bin-hadoop2.7.Then click on the installation file and follow along the instructions to set up Spark. In the Command prompt use the below command to verify Scala installation: scalaĭownload a pre-built version of the Spark and extract it into the C drive, such as C:\Spark. In System Variable Add C:\Program Files (x86)\scala\bin to PATH variable.In User Variable Add SCALA_HOME to PATH with value C:\Program Files (x86)\scala.Step 3: Accept the agreement and click the next button.

#Install apache spark standalone .exe#

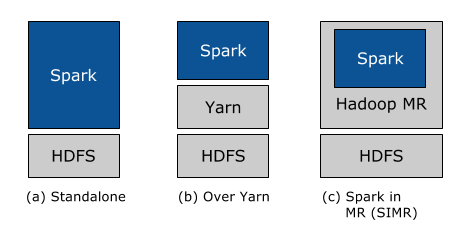

exe file and follow along instructions to customize the setup according to your needs. Open command prompt and type “java –version”, it will show bellow appear & verify Java installation.įor installing Scala on your local machine follow the below steps:.Click on the System variable Add C:\Program Files\Java\jdk1.8.0_261\bin to PATH variable.Click on the User variable Add JAVA_HOME to PATH with value Value: C:\Program Files\Java\jdk1.8.0_261.To set the JAVA_HOME variable follow the below steps: Step 3: Open the environment variable on the laptop by typing it in the windows search bar. Step 2: Open the downloaded Java SE Development Kit and follow along with the instructions for installation. Java installation is one of the mandatory things in spark. Apache Spark is developed in Scala programming language and runs on the JVM. In This article, we will explore Apache Spark installation in a Standalone mode. Hadoop YARN: In this mode, the drivers run inside the application’s master node and is handled by YARN on the Cluster.Apache Mesos: In this mode, the work nodes run on various machines, but the driver runs only in the master node.Standalone Cluster Mode: In this mode, it uses the Job-Scheduling framework in-built in Spark.Standalone Mode: Here all processes run within the same JVM process.It is a combination of multiple stack libraries such as SQL and Dataframes, GraphX, MLlib, and Spark Streaming.
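The JAVA_HOME, SCALA_HOME, and SPARK_HOME settings described above are easy to get slightly wrong, so a small check can help. The following is a minimal sketch in Python, not part of the original walkthrough: the function name `missing_settings` is hypothetical, and it assumes Windows-style PATH entries separated by semicolons.

```python
import os
from pathlib import PureWindowsPath

# Variables named in the installation steps.
REQUIRED_HOMES = ("JAVA_HOME", "SCALA_HOME", "SPARK_HOME")


def missing_settings(env):
    """Return a list of human-readable problems found in the mapping `env`."""
    problems = []
    # PATH entries are separated by ';' on Windows; compare case-insensitively.
    path_entries = {
        str(PureWindowsPath(entry.strip())).lower()
        for entry in env.get("PATH", "").split(";")
        if entry.strip()
    }
    for name in REQUIRED_HOMES:
        home = env.get(name)
        if not home:
            problems.append(f"{name} is not set")
            continue
        # Each *_HOME variable should have its bin folder on PATH.
        bin_dir = str(PureWindowsPath(home) / "bin").lower()
        if bin_dir not in path_entries:
            problems.append(f"{name}\\bin is not on PATH")
    return problems


if __name__ == "__main__":
    # Check the environment of the current process.
    for line in missing_settings(os.environ) or ["all three variables look fine"]:
        print(line)
```

Run it from a newly opened command prompt, since a prompt that was already open will not see variables changed afterwards.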

#Install apache spark standalone install#

You have to install Apache Spark on one node only.
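Once Spark is installed on that node, one quick sanity check is whether the command-line tools actually resolve from PATH. The sketch below uses Python's standard `shutil.which` for the lookup. The helper name `locate_tools` is hypothetical, and including `spark-shell` in the list is an assumption: the article itself only runs java -version and scala.

```python
import shutil

# Commands expected on PATH after setup. "spark-shell" is an assumption;
# the article only mentions running "java -version" and "scala".
TOOLS = ("java", "scala", "spark-shell")


def locate_tools(which=shutil.which):
    """Map each tool name to the path it resolves to, or None if not found."""
    return {tool: which(tool) for tool in TOOLS}


if __name__ == "__main__":
    for tool, location in locate_tools().items():
        print(f"{tool}: {location or 'not found on PATH'}")
```

Passing the lookup function as a parameter keeps the helper easy to exercise without a real installation.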

